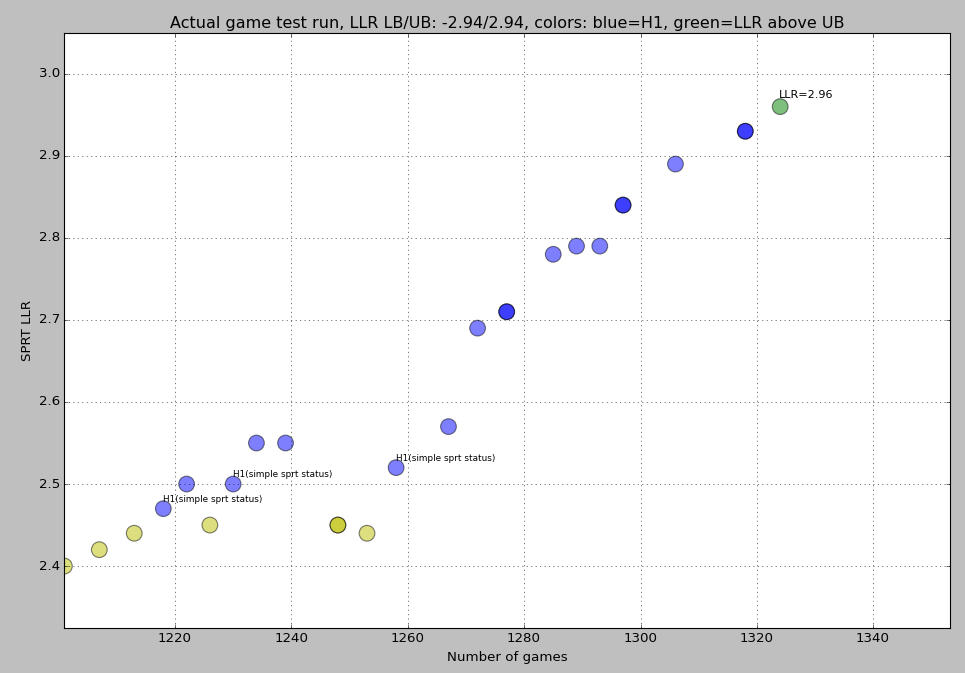

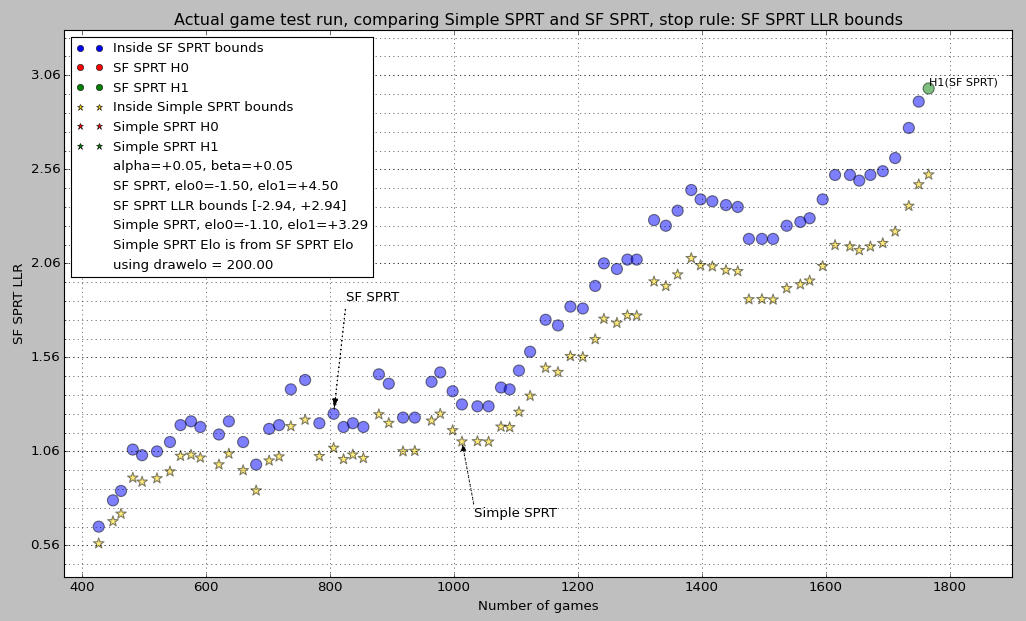

> I do not know how you got LLR ~ 2.4, Michel.

Hi Jesus. I always enjoy talking to you! The 2.4 was of course computed using SimpleSPRT, which uses a different principle from the SF SPRT.

However, the results should be compatible to a first approximation. And they are!

I converted the input data:

Code:

    losses=559
    draws=1271
    wins=637
    elo0=-1.5
    elo1=4.5

Code:

    draw_elo= 198.250953389
    Bayes Elo0= -2.04375986542 Bayes Elo1= 6.13127959626
    LLR= 2.40220453233

Almost the same as SimpleSPRT. I claim that the result by SimpleSPRT is actually the correct one, but in practice the difference should not matter.
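For reference, here is the BayesElo-style model behind these numbers written out as formulas. This is only a transcription of what the code in this post computes; the symbols $d_e$ (draw_elo), $s$ (the scale factor), $e_0, e_1$ (the Bayes Elo bounds) and $N_w, N_d, N_l$ (the win, draw and loss counts) are my own notation, not something taken from SF or SimpleSPRT.

```latex
\begin{aligned}
b &= \frac{\ln 10}{400}, \qquad L(x) = \frac{1}{1 + e^{-b x}}, \\
w(e) &= L(e - d_e), \qquad l(e) = L(-e - d_e), \qquad d(e) = 1 - w(e) - l(e), \\
s &= \frac{4\, e^{-b d_e}}{\left(1 + e^{-b d_e}\right)^{2}}, \qquad e_i = \frac{\mathrm{elo}_i}{s} \quad (i = 0, 1), \\
\mathrm{LLR} &= N_w \ln\frac{w(e_1)}{w(e_0)} + N_d \ln\frac{d(e_1)}{d(e_0)} + N_l \ln\frac{l(e_1)}{l(e_0)}.
\end{aligned}
```

With draw_elo close to 198.25 the scale factor is about 0.734, which is how elo0 = -1.5 and elo1 = 4.5 become roughly -2.04 and 6.13 in Bayes Elo.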

Below is the code to compute these numbers.

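Code:

    from __future__ import division, print_function

    import math

    # from SimpleSPRT import SimpleSPRT  # external module, not needed for this calculation

    bb = math.log(10) / 400  # converts Elo to natural-log units


    def L(x):
        # logistic expected score for an Elo difference x
        return 1 / (1 + math.exp(-bb * x))


    def Linv(s):
        # inverse of L: Elo difference for an expected score s
        return -math.log(1 / s - 1) / bb


    def de_from_dr(dr):
        # draw_elo for which the draw probability at elo=0 equals dr
        return Linv((dr + 1) / 2)


    def scale(de):
        # slope of logistic Elo with respect to Bayes Elo at elo=0
        return (4 * math.exp(-bb * de)) / (1 + math.exp(-bb * de)) ** 2


    def wdl(de, elo):
        # win/draw/loss probabilities in the Bayes Elo model
        w = L(elo - de)
        l = L(-elo - de)
        d = 1 - w - l
        return (w, d, l)


    def LL(de, elo, wins, draws, losses):
        # log-likelihood of the observed counts at Bayes Elo `elo`
        (w, d, l) = wdl(de, elo)
        return wins * math.log(w) + draws * math.log(d) + losses * math.log(l)


    if __name__ == '__main__':
        losses = 559
        draws = 1271
        wins = 637
        elo0 = -1.5
        elo1 = 4.5
        # as written, dr is the score fraction (wins + draws/2)/N, about 0.5158,
        # which is close to the draw ratio 1271/2467, about 0.5152
        dr = (wins + draws / 2) / (wins + draws + losses)
        de = de_from_dr(dr)
        print("draw_elo=", de)
        sc = scale(de)
        belo0 = elo0 / sc
        belo1 = elo1 / sc
        print("Bayes Elo0=", belo0, "Bayes Elo1=", belo1)
        print("LLR=", LL(de, belo1, wins, draws, losses) - LL(de, belo0, wins, draws, losses))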
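As a sanity check on the `elo/sc` conversion, here is a small round-trip sketch: it converts a logistic Elo to Bayes Elo with the same `scale` formula, then maps the resulting win/draw/loss probabilities back to a logistic Elo via the expected score. The helper `logistic_elo_from_bayes` is hypothetical (it is not part of SimpleSPRT or the SF code), and the draw_elo value is simply the one printed above. The round trip should only agree approximately, since `scale` is a first-order (small Elo) conversion.

```python
import math

BB = math.log(10) / 400  # same constant as bb in the post's code


def logistic(x):
    """Expected score for an Elo difference x."""
    return 1 / (1 + math.exp(-BB * x))


def logistic_inv(s):
    """Elo difference that yields expected score s."""
    return -math.log(1 / s - 1) / BB


def wdl(draw_elo, bayes_elo):
    """Win/draw/loss probabilities in the Bayes Elo model."""
    w = logistic(bayes_elo - draw_elo)
    l = logistic(-bayes_elo - draw_elo)
    return w, 1 - w - l, l


def scale(draw_elo):
    """First-order factor relating Bayes Elo to logistic Elo near 0."""
    x = math.exp(-BB * draw_elo)
    return 4 * x / (1 + x) ** 2


def logistic_elo_from_bayes(draw_elo, bayes_elo):
    """Hypothetical helper: logistic Elo implied by the expected score."""
    w, d, l = wdl(draw_elo, bayes_elo)
    return logistic_inv(w + d / 2)


draw_elo = 198.250953389  # value reported in the post
for elo in (-1.5, 4.5):
    bayes_elo = elo / scale(draw_elo)
    back = logistic_elo_from_bayes(draw_elo, bayes_elo)
    print("elo=%.2f  bayes_elo=%.4f  round trip=%.4f" % (elo, bayes_elo, back))
```

For Elo differences this small the round-trip value should land very close to the starting Elo, which is the sense in which the two parametrisations are compatible to first order.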