Hello. I'm trying to improve again the main hash table, and specifically I'm trying to find which of the standard parameters are the most important and which less, to decide which of the 4 entries in any hash position is the best to be overwritten when the 4 are occupied.

So for each entry I have the standard

* age

* type (exact, alpha, beta)

* depth

Are there any study over any clear best strategy?

Thanks.

Hash table

Moderators: hgm, Rebel, chrisw

-

cdani

- Posts: 2204

- Joined: Sat Jan 18, 2014 10:24 am

- Location: Andorra

Hash table

Daniel José -  http://www.andscacs.com

http://www.andscacs.com

-

bob

- Posts: 20943

- Joined: Mon Feb 27, 2006 7:30 pm

- Location: Birmingham, AL

Re: Hash table

Best move is certainly critical. (I have to assume you store a score / bound, otherwise it is pointless).cdani wrote:Hello. I'm trying to improve again the main hash table, and specifically I'm trying to find which of the standard parameters are the most important and which less, to decide which of the 4 entries in any hash position is the best to be overwritten when the 4 are occupied.

So for each entry I have the standard

* age

* type (exact, alpha, beta)

* depth

Are there any study over any clear best strategy?

Thanks.

-

cdani

- Posts: 2204

- Joined: Sat Jan 18, 2014 10:24 am

- Location: Andorra

Re: Hash table

Sorry, I was not clear enough. Of course I store best move, and the key, and the eval (is Andscacsbob wrote:Best move is certainly critical. (I have to assume you store a score / bound, otherwise it is pointless).cdani wrote:Hello. I'm trying to improve again the main hash table, and specifically I'm trying to find which of the standard parameters are the most important and which less, to decide which of the 4 entries in any hash position is the best to be overwritten when the 4 are occupied.

So for each entry I have the standard

* age

* type (exact, alpha, beta)

* depth

Are there any study over any clear best strategy?

Thanks.

Daniel José -  http://www.andscacs.com

http://www.andscacs.com

-

Dann Corbit

- Posts: 12540

- Joined: Wed Mar 08, 2006 8:57 pm

- Location: Redmond, WA USA

Re: Hash table

This is what Stockfish does:

Code: Select all

void save(Key k, Value v, Bound b, Depth d, Move m, Value ev, uint8_t g) {

assert(d / ONE_PLY * ONE_PLY == d);

// Preserve any existing move for the same position

if (m || (k >> 48) != key16)

move16 = (uint16_t)m;

// Don't overwrite more valuable entries

if ( (k >> 48) != key16

|| d / ONE_PLY > depth8 - 4

/* || g != (genBound8 & 0xFC) // Matching non-zero keys are already refreshed by probe() */

|| b == BOUND_EXACT)

{

key16 = (uint16_t)(k >> 48);

value16 = (int16_t)v;

eval16 = (int16_t)ev;

genBound8 = (uint8_t)(g | b);

depth8 = (int8_t)(d / ONE_PLY);

}

}

Taking ideas is not a vice, it is a virtue. We have another word for this. It is called learning.

But sharing ideas is an even greater virtue. We have another word for this. It is called teaching.

But sharing ideas is an even greater virtue. We have another word for this. It is called teaching.

-

Dann Corbit

- Posts: 12540

- Joined: Wed Mar 08, 2006 8:57 pm

- Location: Redmond, WA USA

Re: Hash table

Gull has an interesting method. There are 3 distinct store functions for exact, high, and low

Code: Select all

void hash_high(int value, int depth) {

int i, score, min_score;

GEntry *best, *Entry;

min_score = 0x70000000;

for (i = 0, best = Entry = Hash + (High32(Current->key) & hash_mask); i < 4; i++, Entry++) {

if (Entry->key == Low32(Current->key)) {

Entry->date = date;

if (depth > Entry->high_depth || (depth == Entry->high_depth && value < Entry->high)) {

if (Entry->low <= value) {

Entry->high_depth = depth;

Entry->high = value;

} else if (Entry->low_depth < depth) {

Entry->high_depth = depth;

Entry->high = value;

Entry->low = value;

}

}

return;

} else score = (Convert(Entry->date, int) << 3) + Convert(Max(Entry->high_depth, Entry->low_depth), int);

if (score < min_score) {

min_score = score;

best = Entry;

}

}

best->date = date;

best->key = Low32(Current->key);

best->high = value;

best->high_depth = depth;

best->low = 0;

best->low_depth = 0;

best->move = 0;

best->flags = 0;

return;

}

void hash_low(int move, int value, int depth) {

int i, score, min_score;

GEntry *best, *Entry;

min_score = 0x70000000;

move &= 0xFFFF;

for (i = 0, best = Entry = Hash + (High32(Current->key) & hash_mask); i < 4; i++, Entry++) {

if (Entry->key == Low32(Current->key)) {

Entry->date = date;

if (depth > Entry->low_depth || (depth == Entry->low_depth && value > Entry->low)) {

if (move) Entry->move = move;

if (Entry->high >= value) {

Entry->low_depth = depth;

Entry->low = value;

} else if (Entry->high_depth < depth) {

Entry->low_depth = depth;

Entry->low = value;

Entry->high = value;

}

} else if (F(Entry->move)) Entry->move = move;

return;

} else score = (Convert(Entry->date, int) << 3) + Convert(Max(Entry->high_depth, Entry->low_depth), int);

if (score < min_score) {

min_score = score;

best = Entry;

}

}

best->date = date;

best->key = Low32(Current->key);

best->high = 0;

best->high_depth = 0;

best->low = value;

best->low_depth = depth;

best->move = move;

best->flags = 0;

return;

}

void hash_exact(int move, int value, int depth, int exclusion, int ex_depth, int knodes) {

int i, score, min_score;

GPVEntry *best;

GPVEntry * PVEntry;

min_score = 0x70000000;

for (i = 0, best = PVEntry = PVHash + (High32(Current->key) & pv_hash_mask); i < pv_cluster_size; i++, PVEntry++) {

if (PVEntry->key == Low32(Current->key)) {

PVEntry->date = date;

PVEntry->knodes += knodes;

if (PVEntry->depth <= depth) {

PVEntry->value = value;

PVEntry->depth = depth;

PVEntry->move = move;

PVEntry->ply = Current->ply;

if (ex_depth) {

PVEntry->exclusion = exclusion;

PVEntry->ex_depth = ex_depth;

}

}

return;

}

score = (Convert(PVEntry->date, int) << 3) + Convert(PVEntry->depth, int);

if (score < min_score) {

min_score = score;

best = PVEntry;

}

}

best->key = Low32(Current->key);

best->date = date;

best->value = value;

best->depth = depth;

best->move = move;

best->exclusion = exclusion;

best->ex_depth = ex_depth;

best->knodes = knodes;

best->ply = Current->ply;

}

Taking ideas is not a vice, it is a virtue. We have another word for this. It is called learning.

But sharing ideas is an even greater virtue. We have another word for this. It is called teaching.

But sharing ideas is an even greater virtue. We have another word for this. It is called teaching.

-

AlvaroBegue

- Posts: 931

- Joined: Tue Mar 09, 2010 3:46 pm

- Location: New York

- Full name: Álvaro Begué (RuyDos)

Re: Hash table

I haven't done anything systematic about this, but it seems to me the criteria should be

1) If one of the entries has the correct verification bits, use that one.

2) If there are entries form previous searches, write over those first.

3) In case of equality, write over the entry with the lowest depth.

After some experimentation it became clear to me that at least you always want to write the new information somewhere.

1) If one of the entries has the correct verification bits, use that one.

2) If there are entries form previous searches, write over those first.

3) In case of equality, write over the entry with the lowest depth.

After some experimentation it became clear to me that at least you always want to write the new information somewhere.

-

hgm

- Posts: 27793

- Joined: Fri Mar 10, 2006 10:06 am

- Location: Amsterdam

- Full name: H G Muller

Re: Hash table

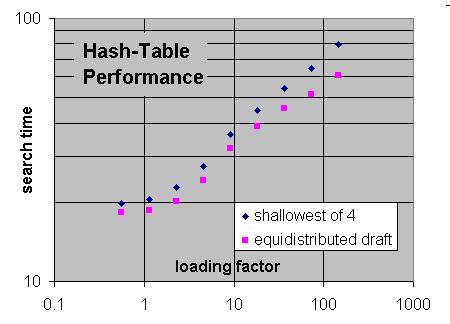

I systematically investigated this some 10 years ago. What worked best was "equidistributed depth". Apart from the obvious things (replacing an earlier entry for the same position, and replacing entries that are no longer reachable from the current root if you have that info and are not in analysis mode), replace the entry on which you get the primary hit if its depth already has more than its fair share in the depth-preferred table, and replace the lowest depth otherwise.cdani wrote:Are there any study over any clear best strategy?

To get that information I keep a histogram hashCount[MAXPLY], updated on every replacement. As in a depth-preferred table the lowest depths tend to be squeezed out, the number of entries of the lowest depth can be used as a threshold for when a higher depth is over-represented.

Code: Select all

index = hashKey & mask;

entry = hashTable + index; // primary hit

// hash probe

'entry' = KeyMatch(entry); // leaves entry unchanged if no match

...

// hash store

if(entry->lock != hashKey && entry->age != searchNr && hashCount[depth] < hashCount[1]) {

entry = LowestDepth(entry); // find lowest depth in bucket

}

hashCount[entry->depth]--;

entry->lock = hashKey;

entry->depth = depth;

entry->age = searchNr;

hashCount[entry->depth]++;

-

cdani

- Posts: 2204

- Joined: Sat Jan 18, 2014 10:24 am

- Location: Andorra

Re: Hash table

Thanks to all! I'm trying various own ideas and I will try also some of the proposals. I will explain whatever result I obtain.

Daniel José -  http://www.andscacs.com

http://www.andscacs.com

-

hgm

- Posts: 27793

- Joined: Fri Mar 10, 2006 10:06 am

- Location: Amsterdam

- Full name: H G Muller

Re: Hash table

Note that it makes sense to protect exact scores better, as they represent more search effort than upper or lower bounds. I never studied the effect of that, but I guess one should treat exact scores in the replacement decision as if they were one ply deeper.

-

cdani

- Posts: 2204

- Joined: Sat Jan 18, 2014 10:24 am

- Location: Andorra

Re: Hash table

After a lot of tests, the best code I found is this:

Sure can be improved, but I think I will keep it for the moment.

Of the examples shown in this thread, I tried hgm approach but was maybe 2 elo worst. But Andscacs stores also quiescence nodes and static eval in the hash, nodes that where keep out of the "hasCount" array and had a simpler replace schema, so probably could be improved somehow.

The

int a1 = (int)h1->age << 3;

idea from Gull seems a little bit better that simpler

int a1 = h1->age < currentage ? -200 : 0;

Now I will do a 4cpu test to be sure that it scales enough well.

Thanks all!

Code: Select all

//basic test on every entry

bool use_entry(){

if (unused entry) {

return true;

}

else {

if (keys match) {

save possible move if we don't have one;

return true;

}

}

return false;

}

void save_hash() {

struct thash *h1;

struct thash *h2;

struct thash *h3;

struct thash *h4;

struct thash *replace;

adjust mate score;

h1 = first_entry;

if (use entry(h1)) {

replace = h1;

goto end;

}

h2 = h1 + 1;

if (use entry(h2)) {

replace = h2;

goto end;

}

h3 = h2 + 1;

if (use entry(h3)) {

replace = h3;

goto end;

}

h4 = h3 + 1;

if (use entry(h4)) {

replace = h4;

goto end;

}

int a1 = (int)h1->age << 3;

int a2 = (int)h2->age << 3;

int a3 = (int)h3->age << 3;

int a4 = (int)h4->age << 3;

a1 += h1->type == exact ? 1 : 0;

a2 += h2->type == exact ? 1 : 0;

a3 += h3->type == exact ? 1 : 0;

a4 += h4->type == exact ? 1 : 0;

a1 += (int)h1->depth;

a2 += (int)h2->depth;

a3 += (int)h3->depth;

a4 += (int)h4->depth;

int i;

if (a2 <= a1) {

replace = h2;

i = a2;

}

else {

i = a1;

replace = h1;

}

if (a3 <= i) {

replace = h3;

i = a3;

}

if (a4 <= i)

replace = h4;

end:

save entry;

}Of the examples shown in this thread, I tried hgm approach but was maybe 2 elo worst. But Andscacs stores also quiescence nodes and static eval in the hash, nodes that where keep out of the "hasCount" array and had a simpler replace schema, so probably could be improved somehow.

The

int a1 = (int)h1->age << 3;

idea from Gull seems a little bit better that simpler

int a1 = h1->age < currentage ? -200 : 0;

Now I will do a 4cpu test to be sure that it scales enough well.

Thanks all!

Daniel José -  http://www.andscacs.com

http://www.andscacs.com