According to the CPW wiki, there are 64*641 inputs. Let's drop the BONA thing and call it 64*64*10. This appears to me to be king_sq * piece_sq * piece_type, where piece_type is something like {wpawn=0, bpawn=1, ... wqueen=8, bqueen=9}.

Under this scheme, however, I do not see how one would distinguish the colour of the king. Namely, it would appear that a black king on E2 with a white pawn on E4 gets the same set of inputs as a white king on E2 with a white pawn on E4. However, through examples I can confirm this is obviously not the case.
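To make the concern concrete, here is a minimal sketch of the indexing as described above. The flat layout, the square numbering (a1 = 0, h8 = 63) and the name `feature_index` are assumptions for illustration only and are not taken from any engine source.

```python
# Hypothetical flat index for the scheme described in the post:
# king_sq * piece_sq * piece_type with ten non-king piece types.
NUM_SQUARES = 64
NUM_PIECE_TYPES = 10  # ten non-king piece types, numbered from 0

def feature_index(king_sq, piece_type, piece_sq):
    """Map (king square, piece type, piece square) to one input bit."""
    return (king_sq * NUM_PIECE_TYPES + piece_type) * NUM_SQUARES + piece_sq

E2, E4 = 12, 28   # assumed numbering: a1 = 0, h8 = 63
W_PAWN = 0

# The king's colour is not an argument, so a white king on E2 and a black
# king on E2 would select the same input for a white pawn on E4.
idx = feature_index(E2, W_PAWN, E4)
total_inputs = NUM_SQUARES * NUM_PIECE_TYPES * NUM_SQUARES
print(idx, total_inputs, 64 * 641)
```

Under these assumptions the simplified count is 64 fewer than the wiki's 64*641, i.e. one extra input per king square.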

My thought is that this cannot be true, and it must be handled by the HalfKP portion. But the HalfKP thing appears to me as just a way to handle side-to-move. All you are doing is shuffling two chunks of layer 1 neurons depending on whose turn it is. You can shuffle the layer 1 neurons all you want, but the same input bits are still set.

NNUE Question - King Placements

Moderators: hgm, Rebel, chrisw

-

AndrewGrant

- Posts: 1777

- Joined: Tue Apr 19, 2016 6:08 am

- Location: U.S.A

- Full name: Andrew Grant

NNUE Question - King Placements

#WeAreAllDraude #JusticeForDraude #RememberDraude #LeptirBigUltra

"Those who can't do, clone instead" - Eduard ( A real life friend, not this forum's Eduard )


-

AndrewGrant

- Posts: 1777

- Joined: Tue Apr 19, 2016 6:08 am

- Location: U.S.A

- Full name: Andrew Grant

Re: NNUE Question - King Placements

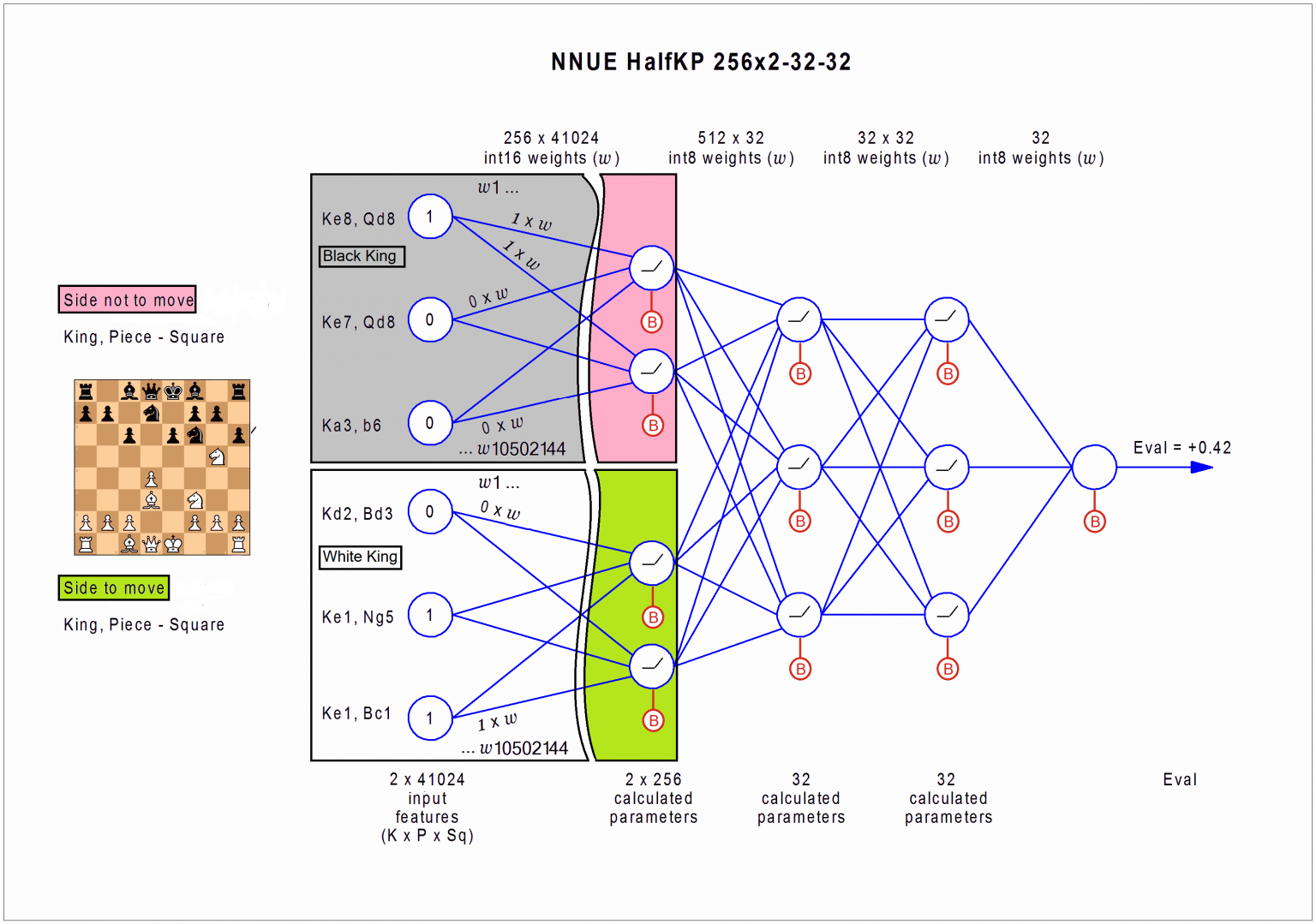

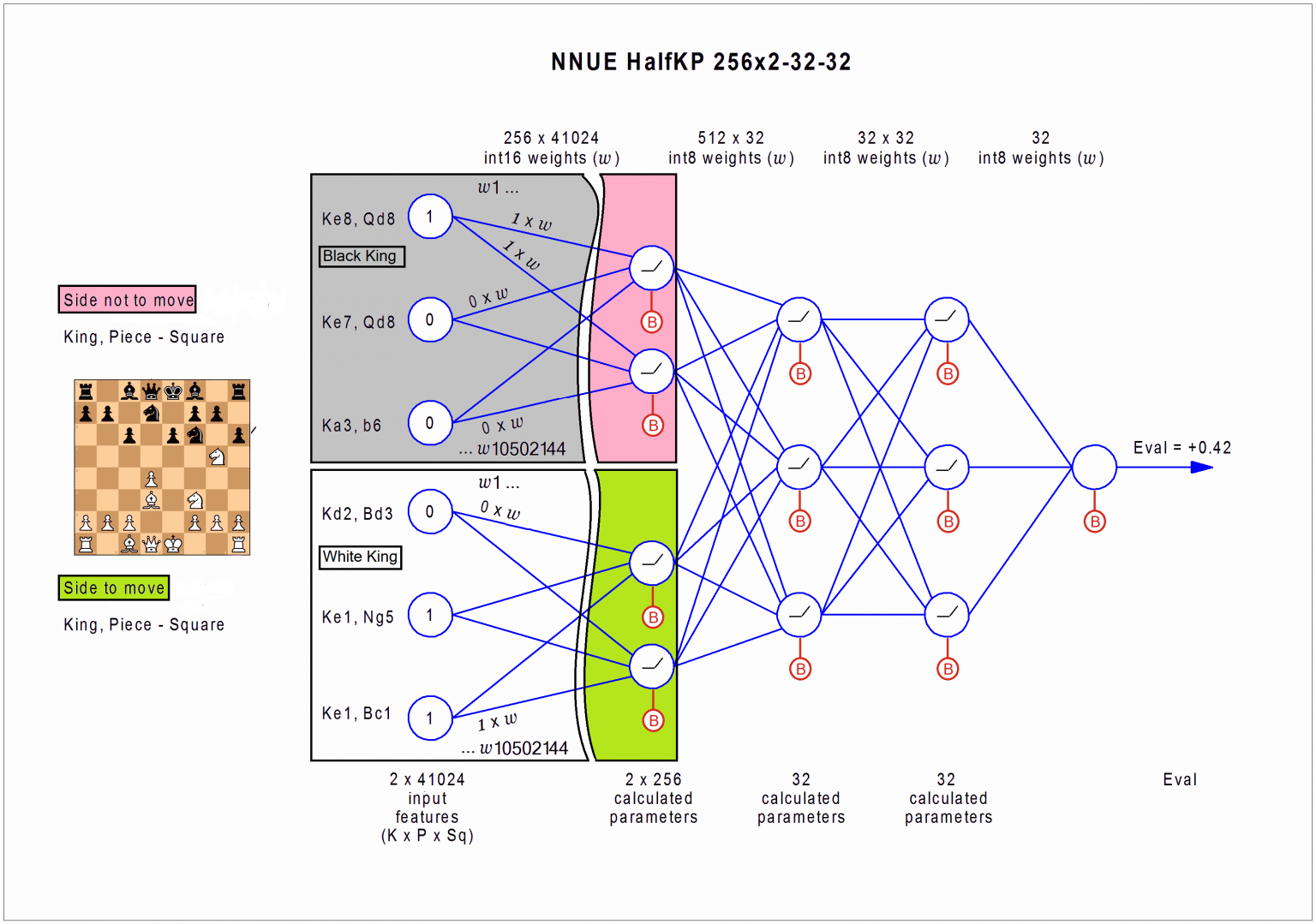

The confusion might be that this image on CPW is wrong

And that the correct one should be this one, which was somewhere in the SF Discord

And that the correct one should be this one, which was somewhere in the SF Discord


-

Gerd Isenberg

- Posts: 2250

- Joined: Wed Mar 08, 2006 8:47 pm

- Location: Hattingen, Germany

Re: NNUE Question - King Placements

Thanks for pointing that out. So the weight labels were corrected; they are the same for both halves.

-

hgm

- Posts: 27837

- Joined: Fri Mar 10, 2006 10:06 am

- Location: Amsterdam

- Full name: H G Muller

Re: NNUE Question - King Placements

What am I missing? I don't see any real difference between the images (except that the latter is missing the ReLU symbol in the deeper cells).

I don't see any reason why the KPST for both players should be the same (i.e. why each weight should be used twice). The situation is not symmetric, as one set of inputs is for the side to move. I would expect the same constellation of pieces to get a completely different score depending on who has the move. Especially in Shogi, where one player has the initiative, and the other player is basically in check all the time. The player that has the initiative doesn't care much about his own King Safety; as long as he is checking, he cannot be attacked. It is only useful as an insurance for the possibility that he might lose the initiative.


-

AndrewGrant

- Posts: 1777

- Joined: Tue Apr 19, 2016 6:08 am

- Location: U.S.A

- Full name: Andrew Grant

Re: NNUE Question - King Placements

hgm wrote: ↑Fri Oct 23, 2020 5:07 pm

> What am I missing? I don't see any real difference between the images (except that the latter is missing the ReLU symbol in the deeper cells).
>
> I don't see any reason why the KPST for both players should be the same (i.e. why each weight should be used twice). The situation is not symmetric, as one set of inputs is for the side to move. I would expect the same constellation of pieces to get a completely different score depending on who has the move. Especially in Shogi, where one player has the initiative, and the other player is basically in check all the time. The player that has the initiative doesn't care much about his own King Safety; as long as he is checking, he cannot be attacked. It is only useful as an insurance for the possibility that he might lose the initiative.

Your confusion is that Gerd updated the CPW wiki, which updated the image I linked, lol.

The difference is how the weights are labeled. One repeats the same labels twice, the other does not.


-

Gerd Isenberg

- Posts: 2250

- Joined: Wed Mar 08, 2006 8:47 pm

- Location: Hattingen, Germany

Re: NNUE Question - King Placements

hgm wrote: ↑Fri Oct 23, 2020 5:07 pm

> What am I missing? I don't see any real difference between the images (except that the latter is missing the ReLU symbol in the deeper cells).
>
> I don't see any reason why the KPST for both players should be the same (i.e. why each weight should be used twice). The situation is not symmetric, as one set of inputs is for the side to move. I would expect the same constellation of pieces to get a completely different score depending on who has the move. Especially in Shogi, where one player has the initiative, and the other player is basically in check all the time. The player that has the initiative doesn't care much about his own King Safety; as long as he is checking, he cannot be attacked. It is only useful as an insurance for the possibility that he might lose the initiative.

This was the earlier image with the confusing weight labels that Andrew initially linked to. But in the meantime the image was corrected. That's the problem with reference versus copy.

-

Gerd Isenberg

- Posts: 2250

- Joined: Wed Mar 08, 2006 8:47 pm

- Location: Hattingen, Germany

Re: NNUE Question - King Placements

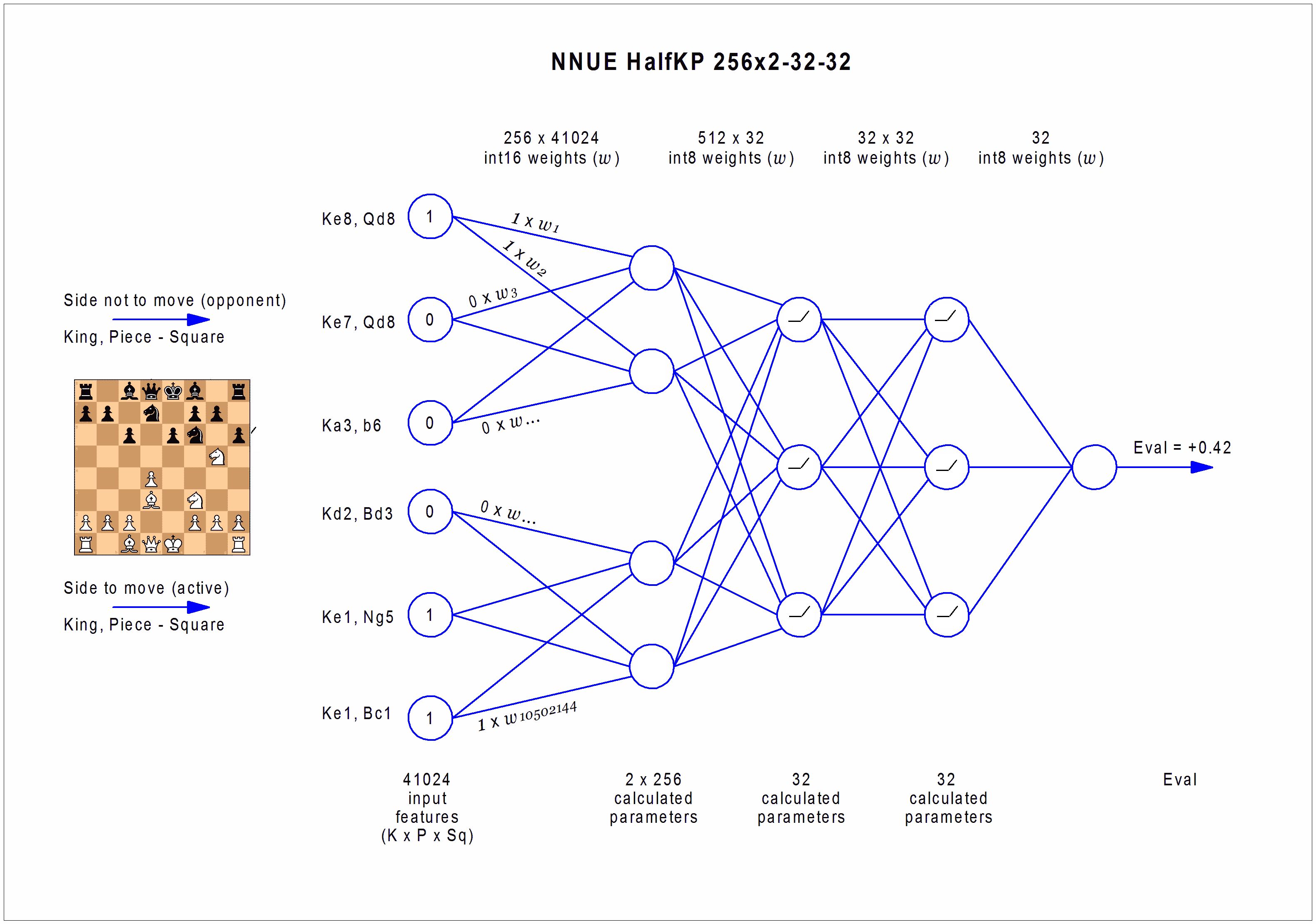

After some thinking, I believe the weights are not shared between the two halves; the initial NNUE graph with its weight enumeration was more correct, and I was too hasty to change it.

The upper black half has black king placement times 5 black piece types on 64 squares, and the white half, white king placement times 5 white pieces on 64 squares. Otherwise, with 10 pieces in both halves there would be 2 x 41024 inputs.


-

syzygy

- Posts: 5569

- Joined: Tue Feb 28, 2012 11:56 pm

Re: NNUE Question - King Placements

Gerd Isenberg wrote: ↑Fri Oct 23, 2020 10:25 pm

> After some thinking, I believe the weights are not shared between two halves and the initial NNUE graph with its weight enumeration was more correct and I was too hasty to change it.
>
> The upper black half has black king placement times 5 black piece types on 64 squares, and the white half, white king placement times 5 white pieces on 64 squares. Otherwise, with 10 pieces in both halves there would be 2 x 41024 inputs.

The weights are shared by the two halves.

The white-king half has 10x64x64 weights for (piece type, piece square, white-king square).

The black-king half also has 10x64x64 weights for (piece type, piece square, black-king square).

The weights are shared in that the "white-king weight" for (pt, sq, wksq) equals the "black-king weight" for (pt ^ 8, sq ^ 63, bksq), where pt ^ 8 flips the piece's color and sq ^ 63 rotates the board by 180 degrees. It is correct that sq ^ 56 would be more logical, but at the moment it is still sq ^ 63 (in Stockfish). Changing that would require a newly trained net.
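The mapping can be sketched in a few lines. This is only an illustration: the piece codes (white pawn = 1, black pawn = 9, so that bit 3 carries the colour), the a1 = 0 square numbering and the helper names are assumptions, and how the king square itself is oriented is not covered here.

```python
W_PAWN, B_PAWN = 1, 9   # assumed piece codes: bit 3 is the colour bit

def mirror_feature(pt, sq):
    """Flip the piece colour (pt ^ 8) and rotate the square 180 degrees (sq ^ 63)."""
    return pt ^ 8, sq ^ 63

def square(name):
    """Convert a name like 'e4' to 0..63, assuming a1 = 0 and h8 = 63."""
    return (int(name[1]) - 1) * 8 + (ord(name[0]) - ord("a"))

# A white pawn on e4 in one half pairs with a black pawn on d5 in the other:
# the 180-degree rotation changes the file as well as the rank.
pt, sq = mirror_feature(W_PAWN, square("e4"))

# A vertical flip (sq ^ 56) would keep the file and give e5 instead.
flipped = square("e4") ^ 56
```

Applying `mirror_feature` twice returns the original pair, which is what makes the two halves interchangeable views of the same weights.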

-

Gerd Isenberg

- Posts: 2250

- Joined: Wed Mar 08, 2006 8:47 pm

- Location: Hattingen, Germany

Re: NNUE Question - King Placements

syzygy wrote: ↑Fri Oct 23, 2020 10:58 pm

> Gerd Isenberg wrote: ↑Fri Oct 23, 2020 10:25 pm
>
> > After some thinking, I believe the weights are not shared between two halves and the initial NNUE graph with its weight enumeration was more correct and I was too hasty to change it.
> >
> > The upper black half has black king placement times 5 black piece types on 64 squares, and the white half, white king placement times 5 white pieces on 64 squares. Otherwise, with 10 pieces in both halves there would be 2 x 41024 inputs.
>
> The weights are shared by the two halves.
>
> The white-king half has 10x64x64 weights for (piece type, piece square, white-king square).
>
> The black-king half also has 10x64x64 weights for (piece type, piece square, black-king square).
>
> The weights are shared in that the "white-king weight" for (pt, sq, wksq) equals the "black-king weight" for (pt ^ 8, sq ^ 63, bksq) where pt ^ 8 flips the piece's color and sq ^ 63 rotates the board by 180 degrees. It is correct that sq ^ 56 would be more logical, but at the moment it is still sq ^ 63 (in Stockfish). Changing that would require a newly trained net.

Thanks - so there are indeed 2x41024 inputs over both halves. But the labels in the above NNUE graph seem associated with the wrong king-piece pairs, such as w1 to {ke8,qd8} and to {Kd2,Bd3} rather than {Ke8,Qe1}. I need to have a closer look at the sources to identify where the input layer is defined.

-

syzygy

- Posts: 5569

- Joined: Tue Feb 28, 2012 11:56 pm

Re: NNUE Question - King Placements

The accumulator has a "white king" half and a "black king" half, where each half is a 256-element vector of 16-bit ints, which is equal to the sum of the weights of the "active" (pt, sq, ksq) features.
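As a rough sketch of what "sum of the weights of the active features" means for one half, assuming a hypothetical dictionary that maps a feature index to its 256 weights (a real net stores one large array, and may also start from a bias vector, which is not described here):

```python
HALF_SIZE = 256  # elements per accumulator half, as stated above

def accumulate(weights, active_features):
    """Sum the weight rows of the active features into one accumulator half."""
    acc = [0] * HALF_SIZE
    for f in active_features:
        row = weights[f]
        for i in range(HALF_SIZE):
            acc[i] += row[i]
    return acc

# Toy weights: feature f contributes the constant value f to every element.
toy_weights = {f: [f] * HALF_SIZE for f in (3, 5, 11)}
acc = accumulate(toy_weights, [3, 5, 11])
```

Because the result is a plain sum, a feature can be added or removed after a move by adding or subtracting one row rather than recomputing everything.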

The "transform" step of the NNUE evaluation forms a 512-element vector of 8-bit ints where the first half is formed from the 256-element vector of the side to move and the second half is formed from the 256-element vector of the other side. In this step the 16-bit elements are clipped/clamped to a value from 0 to 127. This is the output of the input layer.
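The transform step can be sketched as follows. The function and argument names are made up for illustration; only the ordering by side to move and the 0..127 clamp come from the description above.

```python
def clamp(v, lo=0, hi=127):
    """Clip a 16-bit accumulator value into the 0..127 range."""
    return max(lo, min(hi, v))

def transform(acc_white, acc_black, white_to_move):
    """Concatenate the two halves, side to move first, clamping each element."""
    if white_to_move:
        first, second = acc_white, acc_black
    else:
        first, second = acc_black, acc_white
    return [clamp(v) for v in first] + [clamp(v) for v in second]

acc_w = [-5, 300] + [10] * 254
acc_b = [64] * 256
out = transform(acc_w, acc_b, white_to_move=False)
```

With Black to move, the black-king half occupies the first 256 elements, so the same accumulator values meet a different part of the next layer's weight matrix than they would with White to move.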

This 512-element vector of 8-bit ints is then multiplied by a 32x512 matrix of 8-bit weights to get a 32-element vector of 32-bit ints, to which a vector of 32-bit biases is added. The sum vector is divided by 64 and clipped/clamped to a 32-element vector of 8-bit ints from 0 to 127. This is the output of the first hidden layer.

The resulting 32-element vector of 8-bit ints is multiplied by a 32x32 matrix of 8-bit weights to get a 32-element vector of 32-bit ints, to which another vector of 32-bit biases is added. These ints are again divided by 64 and clipped/clamped to 32 8-bit ints from 0 to 127. This is the output of the second hidden layer.
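Both hidden layers follow the same pattern, which can be sketched with one helper. This is an illustration with tiny made-up sizes; `hidden_layer` is a hypothetical name, and floor division stands in for "divided by 64" (the rounding of negative sums does not matter, since they are clamped to 0 anyway).

```python
def hidden_layer(x, weights, biases):
    """Matrix-vector product plus bias, divide by 64, clamp to 0..127."""
    out = []
    for row, b in zip(weights, biases):
        total = b + sum(w * v for w, v in zip(row, x))  # 32-bit sum in a real net
        out.append(max(0, min(127, total // 64)))
    return out

# Toy example with 2 inputs and 3 outputs instead of 512 -> 32 or 32 -> 32.
x = [127, 1]
weights = [[64, 0], [-1, 0], [2, 0]]
biases = [0, 10, 64]
y = hidden_layer(x, weights, biases)
```

The first output saturates at 127, the second is negative before clamping and becomes 0, and the third lands inside the range.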

This 32-element vector of 8-bit ints is then multiplied by a 1x32 matrix of 8-bit weights (i.e. the inner product of two vectors is taken). This produces a 32-bit value to which a 32-bit bias is added. This gives the output of the output layer.

The output of the output layer is divided by FV_SCALE = 16 to produce the NNUE evaluation. SF's evaluation then takes some further steps such as adding a Tempo bonus (even though the NNUE evaluation inherently already takes into account the side to move in the "transform" step) and scaling the evaluation towards zero as rule50_count() approaches 50 moves.
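The last two steps can be sketched like this. The helper names are hypothetical, the division is assumed to truncate toward zero as C-style integer division does, and the Tempo bonus and rule50 scaling are left out.

```python
FV_SCALE = 16

def output_layer(x, weights, bias):
    """Inner product of the 32 inputs with the 32 weights, plus the bias."""
    return bias + sum(w * v for w, v in zip(weights, x))

def nnue_eval(raw_output):
    """Divide by FV_SCALE, truncating toward zero (assumed C-style division)."""
    q = abs(raw_output) // FV_SCALE
    return q if raw_output >= 0 else -q

x = [10] * 32          # output of the second hidden layer (toy values)
weights = [3] * 32     # toy 8-bit weights
raw = output_layer(x, weights, bias=40)
score = nnue_eval(raw)
```

Here the raw output is 1000, which scales down to 62 after the division.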
